Artificial intelligence is no longer just about crunching numbers or automating tasks. It is becoming something more intuitive, more responsive, and more capable of understanding the world as we do. That shift is being driven by a blend of technologies working quietly in the background: Machine Learning and Deep Learning, NLP and Text Analytics, Computer Vision and Image AI, and the often overlooked but essential work of Data Engineering and Labeling.

These tools are helping machines not just process information, but interpret it. And as they do, they are becoming better at engaging with us in ways that feel less mechanical and more meaningful.

Learning from Data the Human Way

Machine Learning and Deep Learning are the foundation of modern AI. They allow systems to learn from experience, improve over time, and make decisions based on patterns rather than fixed rules. Deep Learning, in particular, is loosely inspired by the way our brains process information: layer by layer, connection by connection.

This is what enables a recommendation engine to suggest a movie you might actually enjoy, or a medical system to flag a condition that might otherwise go unnoticed. These models are not just powerful; they are adaptive. And that adaptability is what makes them feel more human.
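To make "patterns rather than fixed rules" a little more concrete, here is a minimal sketch of a perceptron, one of the simplest learning models. It is a toy, not how a production recommendation engine works: the viewing history, the feature names (`likes_genre`, `likes_director`), and the training settings are all assumptions made up for illustration.

```python
# Toy perceptron: learns a decision rule from labeled examples instead of
# having the rule written by hand. All data below is made up for illustration.

def predict(weights, bias, features):
    """Return 1 if the weighted sum of the features exceeds zero, else 0."""
    activation = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, labels, epochs=20, learning_rate=1.0):
    """Nudge the weights toward the correct answer after every mistake."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in zip(samples, labels):
            error = label - predict(weights, bias, features)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical viewing history: (likes_genre, likes_director) -> enjoyed?
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 0, 0, 1]

weights, bias = train_perceptron(samples, labels)
predictions = [predict(weights, bias, s) for s in samples]
print(predictions)
```

Nobody wrote an "enjoyed only if both" rule into the code. The model arrived at it by adjusting its weights each time it guessed wrong, which is the adaptability described above in miniature.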

Teaching Machines to Read Between the Lines

Language is messy. It is full of nuance, emotion, and context. Natural Language Processing and Text Analytics are the tools that help machines make sense of it all. They allow AI to read, summarize, translate, and even detect sentiment in written and spoken communication.

Whether it is a chatbot responding to a customer query or a system analyzing thousands of reviews to spot trends, NLP is what makes those interactions feel less robotic. It is not just about understanding words; it is about understanding meaning.

And as these systems get better at grasping tone, intent, and cultural context, they become more capable of holding conversations that feel natural and respectful.
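As a rough illustration of sentiment detection, the sketch below scores a sentence with a tiny hand-written word list and flips the polarity of the next sentiment word after a negation. The word lists and the `sentiment_score` function are hypothetical, and real NLP systems typically rely on trained models rather than fixed lexicons like this one.

```python
import re

# Hypothetical mini-lexicons, chosen only for this example.
POSITIVE = {"great", "love", "helpful", "fast"}
NEGATIVE = {"slow", "broken", "rude", "terrible"}
NEGATIONS = {"not", "never", "no"}


def sentiment_score(text):
    """Return a positive, negative, or zero score for a piece of text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    negate = False
    for token in tokens:
        if token in NEGATIONS:
            negate = True  # flip the next sentiment word we encounter
            continue
        polarity = (token in POSITIVE) - (token in NEGATIVE)
        if polarity:
            score += -polarity if negate else polarity
            negate = False
    return score


reviews = [
    "I love the fast support",
    "The app is not helpful",
    "Terrible and slow",
]
for review in reviews:
    print(review, "->", sentiment_score(review))
```

Even this toy shows why context matters: without the negation check, "not helpful" would be counted as praise.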

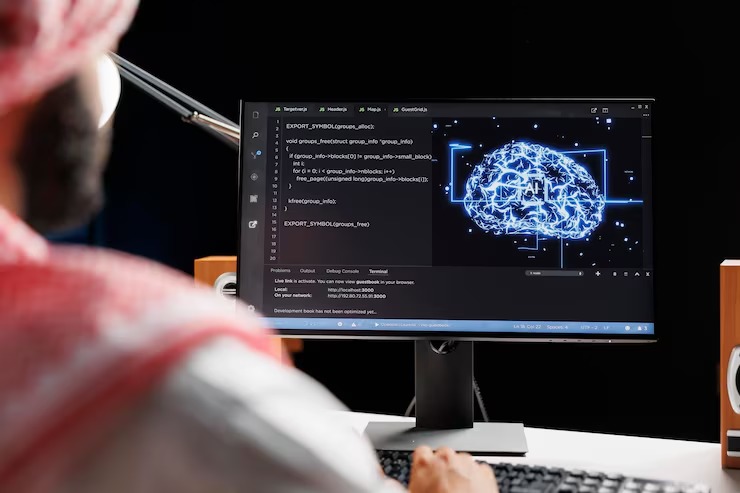

Giving Sight to Algorithms

While language helps machines understand what we say, Computer Vision and Image AI help them understand what we see. These technologies allow systems to recognize faces, objects, gestures, and scenes. They are used in everything from security cameras and medical imaging to autonomous vehicles and augmented reality.

But the real magic happens when these systems go beyond recognition and begin to interpret. A machine that can detect fatigue in a driver or identify early signs of disease in a scan is not just seeing; it is understanding.

And that understanding opens the door to more responsive, empathetic technology.

The Invisible Work Behind Smart AI

None of this would be possible without the quiet, meticulous work of Data Engineering and Labeling. These processes ensure that the data feeding AI systems is clean, organized, and meaningful. Data engineering involves building pipelines that collect and prepare information. Labeling adds context—marking images, tagging text, and helping models learn what matters.

It is often a human task, requiring judgment and care. Whether it is identifying sarcasm in a tweet or labeling a tumor in a scan, this work helps machines learn with nuance.

And while it may not get the spotlight, it is the backbone of every intelligent system.
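One small piece of that labeling work can be sketched in code: combining labels from several human annotators and flagging the items they disagree on. This is a minimal sketch under stated assumptions. The item IDs, label names, `aggregate_labels` function, and the 0.7 review threshold are all hypothetical, and each item is assumed to have at least one label.

```python
from collections import Counter


def aggregate_labels(annotations, review_threshold=0.7):
    """Pick the majority label per item and flag low-agreement items.

    annotations maps an item ID to the list of labels its annotators gave.
    """
    results = {}
    for item_id, labels in annotations.items():
        cleaned = [label.strip().lower() for label in labels]
        label, votes = Counter(cleaned).most_common(1)[0]
        agreement = votes / len(cleaned)
        results[item_id] = {
            "label": label,
            "agreement": agreement,
            "needs_review": agreement < review_threshold,
        }
    return results


# Hypothetical annotations from three annotators per item.
annotations = {
    "tweet-1": ["sarcastic", "Sarcastic ", "sincere"],
    "scan-7": ["tumor", "tumor", "tumor"],
}
results = aggregate_labels(annotations)
for item_id, summary in results.items():
    print(item_id, summary)
```

Items flagged for review are the ones where human judgment matters most, such as a tweet that one annotator reads as sarcasm and another takes at face value.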

Where These Technologies Are Already Making a Difference

Across industries, these technologies are quietly transforming how things work:

- In healthcare, AI is helping doctors diagnose faster and more accurately

- In finance, it is spotting fraud and guiding investment decisions

- In retail, it is personalizing shopping experiences and streamlining logistics

- In education, it is adapting lessons to individual learning styles

- In agriculture, it is monitoring crop health and predicting yields

These are not just technical improvements. They are shifts in how services are delivered, how decisions are made, and how people interact with systems.

What We Still Need to Get Right

As AI becomes more capable, it also becomes more complex. And with that complexity come challenges. Bias in data can lead to unfair outcomes. Misinterpretation of language or images can cause errors. Overreliance on automation can reduce human oversight.

Ethical AI is not just a technical goal; it is a human one. It means building systems that are transparent, inclusive, and accountable. It means asking not just what AI can do, but what it should do.
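Accountability can start with simple measurements. The sketch below compares how often a system gives a favorable outcome to different groups, one basic check among many for unfair outcomes. The group names, records, and function names are invented for illustration, and a gap in rates is a prompt for closer human review rather than proof of bias on its own.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """Return the share of favorable outcomes for each group.

    records is an iterable of (group, outcome) pairs, where outcome is 1
    for a favorable decision and 0 otherwise.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += 1 if outcome else 0
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group rates."""
    return max(rates.values()) - min(rates.values())


# Hypothetical decisions for two made-up groups.
records = [
    ("group_a", 1), ("group_a", 1), ("group_a", 0), ("group_a", 1),
    ("group_b", 1), ("group_b", 0), ("group_b", 0), ("group_b", 0),
]
rates = positive_rate_by_group(records)
print(rates, parity_gap(rates))
```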

Looking Ahead to More Human AI

The future of AI is not just smarter; it is more sensitive. Advances in multimodal learning are allowing systems to combine text, image, and sound for deeper understanding. Prompt engineering is helping guide models toward more thoughtful and accurate responses.

We are moving toward systems that do not just respond; they relate. That means AI that can adapt to different cultures, languages, and emotional states. It means technology that feels less like a tool and more like a companion.

And it means continuing to build with empathy, curiosity, and care.

Wrapping It All Together

Artificial intelligence is learning to see, listen, and understand. Through Machine Learning and Deep Learning, NLP and Text Analytics, Computer Vision and Image AI, and the foundational work of Data Engineering and Labeling, we are teaching machines to engage with the world in ways that feel more human.

In the midst of all this complexity, there are teams quietly working to make these technologies feel more grounded and usable. Ment Tech, for instance, has been exploring how to bring together machine learning, text analytics, computer vision, and data engineering in ways that actually serve people. Rather than building isolated tools, they focus on stitching these capabilities into systems that respond to real-world needs, whether that means helping a chatbot understand tone or enabling a model to interpret both images and text in context. It is less about flashy innovation and more about thoughtful integration.
